Introduction

Much is being written and discussed about Artificial Intelligence (AI). Some of it is very positive, and some of it is very doomsday-like. Some say AI is the ultimate savior of humanity, while others say it will end civilization as we know it. It has been hailed as a force multiplier and the ultimate timesaver. I take a more cautious approach and recommend that you do the same. I have nothing against AI technology when it is used correctly. I fear, however, that people are rushing into this new technology without realizing its limitations and are oblivious to its dangers to both their security and their privacy.

For the record, I use AI chatbots a lot. I switch between Copilot, Claude, ChatGPT, and Le Chat to understand how they differ, where they shine, and where they fall short. Look for a dedicated blog article on that later in the year.

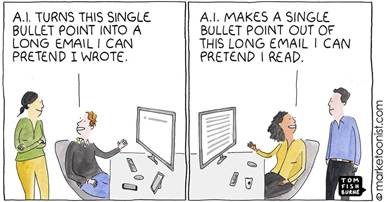

I use them as an assistant or an intern. I have them collect data for me, brainstorm layouts, and so on. I double-check and validate all the facts and figures they give me, check the citations they provide, and decide what to include and what to leave out.

I do not yet trust it with access to any of my systems or accounts.

With that preface out of the way, let’s get started. (See the references at the bottom.)

Definitions

Before we dive into the details, let us discuss some key terms in the field of AI.

- Large Language Models (LLMs): The algorithmic basis for chatbots and generative AI (IBM, 2023) (Mearian, 2024) (Wikipedia, 2025)

- Chatbots: Applications on various websites that use AI and LLMs to interact with customers, attempting to answer their questions without human intervention.

- Artificial Narrow Intelligence (ANI), also known as Weak AI or Narrow AI, is the only type of AI that exists today. Any other form of AI is theoretical or science fiction. It can be trained to perform a single, narrow task, often (but not always) more efficiently and quickly than a human mind can. However, it is not capable of independent thought, nor can it exceed its training. (IBM, 2023)

- Artificial general intelligence (AGI), also called general AI or strong AI, is a theoretical form of AI that can learn, think, and perform a wide range of tasks at a human level, including autonomously. The ultimate goal of AGI is to create machines that are capable of versatile, human-like intelligence, functioning as highly adaptable assistants in everyday life. (IBM, 2023) (Wikipedia, 2025)

- Super AI, also known as artificial superintelligence, is strictly theoretical, as is AGI. If ever realized, Super AI would think, reason, learn, make judgments, and possess cognitive abilities that surpass those of human beings. (IBM, 2023)

- Generative AI: A subtype of narrow AI specifically designed to fabricate text, images, or other content. For text, it relies on an LLM that predicts which words are most related to the requested topic and which words to string together to form coherent sentences. (Wikipedia, 2025)

Science fiction

As indicated above, anything other than narrow (aka weak) AI is strictly theoretical and science fiction at this point. So, any Terminator-style doom-and-gloom predictions are a little premature, as are predictions of helpful robots like those in The Jetsons. Salespeople and leaders from AI companies such as OpenAI, Anthropic, and Mistral claim that AGI will be available by 2027. The experts I listen to tend to disagree, saying that AGI will not see the light of day for at least 50 years, if ever, and that making it even remotely plausible would require breakthrough mathematics or some brand-new science not yet known. I side with the experts in thinking that science-fiction AI is a few lifetimes away. Whether AI will be destructive or helpful remains to be seen and will largely depend on how society as a whole develops in the meantime. I believe it will be a force multiplier, magnifying societal norms.

I hope for a loving, caring, and cooperative society, which AGI will reflect.

Some LLMs are getting really good at interpreting vague instructions, and folks are hyping this up as autonomy, which it is not. LLMs are simply getting better programming and larger models, which allows them to interpret more.

In short, today’s LLMs are nothing more than a really advanced auto-complete. They take your instructions and apply statistical analysis to interpret what you want, then use the same statistical analysis to figure out what text makes the most sense. They are good at searching the internet and finding an answer to your query; however, that “right” answer could be a blog post or a Reddit post from a know-it-all who, in fact, knows nothing about the topic. If it can’t find an answer, it will simply make something up.
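To make the auto-complete comparison concrete, here is a deliberately tiny sketch in Python of a statistical next-word predictor (a bigram model). It only counts which word follows which in its training text and always picks the most frequent follower. Real LLMs use neural networks trained on vastly more data and look at far more context than a single previous word, so treat this as an illustration of the idea, not of how any particular chatbot is built. The function names `train_bigrams` and `autocomplete` are made up for this example.

```python
from collections import Counter, defaultdict


def train_bigrams(text: str) -> dict[str, Counter]:
    """Count how often each word is followed by each other word."""
    words = text.lower().split()
    follows: dict[str, Counter] = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        follows[current][nxt] += 1
    return follows


def autocomplete(follows: dict[str, Counter], start: str, length: int = 5) -> list[str]:
    """Greedily append the statistically most likely next word."""
    out = [start.lower()]
    for _ in range(length):
        candidates = follows.get(out[-1])
        if not candidates:
            break  # this toy stops; it has no data on what comes next
        # most_common(1) picks the highest count; ties go to the first word seen
        out.append(candidates.most_common(1)[0][0])
    return out


corpus = "the cat sat on the mat . the cat ate the fish ."
model = train_bigrams(corpus)
print(" ".join(autocomplete(model, "the", 4)))  # the cat sat on the
```

The model happily writes “the cat sat on the” without understanding cats, mats, or anything else; it is simply replaying the most common patterns in whatever it was fed. Had the training text been one person’s mistaken opinion, that is exactly what it would echo back.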

The Hype

As explained above, there is a lot of hype surrounding artificial intelligence; so far, the hype far outpaces the substance, much as artificial intelligence itself is mostly artificial with little to no intelligence.

I haven’t seen the hype machine this strong and this busy since everyone was going gaga over NFTs. At least this time, there is substance behind the hype; the technology just isn’t living up to the promises. It’s fabulous when used right and dangerous when used wrong.

Reality

The reality is that an LLM is nothing more than a really fancy statistical language model: a model that uses statistics to figure out the connections between words. Narrow AI does not understand language; it has a statistical model of how language works.

Let us discuss what narrow artificial intelligence can do for us today, where it excels and where it falls short, along with some potential dangers to be aware of.

Dangers

Let us tackle the dangers first. As alluded to above, AI is no silver bullet; it must be trained for specific tasks, so naturally, it excels only in those particular areas. The most significant danger I see many folks fall into is thinking it is a magic solution that will solve everything, what I call the over-trust danger. It is kind of an “I’ve got a hammer, so everything is a nail” situation. Attempting to use AI for tasks it hasn’t been explicitly trained for will never end well, and the same applies to over-trusting any AI tool. Unquestioningly trusting an AI tool’s output will lead to disaster. Never use the output of an AI tool directly as an input into another tool without human supervision if you want to avoid problems.

Thinking it will always give you a factually correct answer can be fatal, figuratively and literally speaking.

There is a problem that has been labeled “hallucinations.” However, there is debate about the accuracy of this term, as it implies cognitive abilities that AI does not possess today. Whether we like the term or not, it refers to situations in which the chatbot generates a fictional answer with no basis in reality and presents it as authoritative and factual. As far as I know, this is a significant issue with all publicly available AI tools, which is why you should not trust any current AI tool without question; most of them include a disclaimer about this. The phenomenon also shows up in summaries, where the summary contains statements that are not present in the original, longer document.

How significant an issue this is for you will vary greatly. The accuracy of an LLM’s answer is directly correlated with the amount of data on that subject the LLM was trained on. If the only available data is some random guy’s post on Reddit, that post becomes the LLM’s source of truth. If it can’t find any source data, it will use its statistical model to estimate the most likely answer.

The other danger with chatbots and other forms of AI is that everything you type can be stored by the provider and used to train the LLM behind that bot. So, if you input proprietary information into a public chatbot to check grammar or spelling, summarize, or paraphrase it, you have effectively released that information publicly, just as if you had posted the document on a public website. Even just asking a question about a sensitive topic can be a tip-off. It’s really scary how much it knows about you after you’ve used it for a while. This applies regardless of the tool in question. Be cautious with Microsoft Copilot, Google Gemini, and similar tools when it comes to proprietary information. Any tool that claims to have built-in AI, which is practically every tool these days, should be viewed with skepticism.

To put this into context, if you are familiar with Reddit, StackExchange, and other forum-based social media or support sites, asking a question of an LLM has the same level of privacy as posting the question on those forums.

The only exception to this I know of is Proton Lumo, which explicitly does not learn from your interactions or store your questions outside your encrypted account. The downside is that, because Lumo does not learn from user interactions, it can feel more limited.

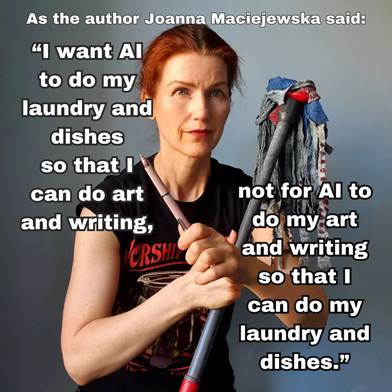

Where it shines

Chatbots and other forms of weak AI excel as advanced search engines, provided they are used the way traditional search engines are used today. That means you use the chatbot to find something for you, then review the result with critical thinking and prompt for more details as needed. Challenge the answer and ask probing questions, and you’ll quickly see how much factual information is behind it.

Copilot is a very apt name for any sort of weak AI, as that is its best use case. Use it to help you get started, point you in the right direction, and inspire you, among other things. You are still in charge; it feeds you information, and you decide whether to use it. For example, if I need to write a policy statement, I instruct my favorite LLM to create a document for me, and it provides a template that I use as the basis for my document. I then rewrite it extensively, but it serves as a great starting point and skeleton.

I once saw an analogy that I loved. Generative AI is like the college intern. It is excellent at collecting and correlating data, even writing the first draft. But you would never publish a document written by the intern without extensive editing first. You need to read over the document and question the intern about where the data came from, the assumptions they made, etc., as well as whether they were high on mushrooms while writing that document. They will, of course, never admit to being high, so you need to use your critical interviewing skills to determine that.

Chatbots are great at sounding confident and authoritative while giving a 100% wrong answer (see hallucinations above). If you are not critical of the answer or do not know better, you could be duped into believing misinformation.

Chatbots can be a valuable customer resource when integrated with, and trained on, a particular company’s product documentation, especially if they are programmed to ask customers whether they need more help and, if the answer is yes, connect them to a human or automatically create a support request.

If you need a 1000-word fictional essay on a specific topic that does not really say much of anything, generative AI really shines at creating that sort of froth. Need placeholder text for a tool or website and want something better than Lorem Ipsum? Have your favorite chatbot generate some froth for you.

Need a product description for your online store that is the right length to pass muster with SEO tools? LLMs are great at that. Just make sure to read it over and correct any factual inaccuracies.

If you need an impressionistic picture, generative AI can whip up several stunningly beautiful pictures lickety-split. As long as it does not need to be accurate, and you are okay with things like a human with three arms and six fingers on each hand, it can create some beautiful pictures for you. This is bound to improve over time, though.

Where it is lacking

If you are looking for factual and accurate information, you had better not trust it too much. It can be helpful if you are looking for a template to use as a base. Any instructions from any public chatbot should be taken with a massive grain of salt. If you do not have the knowledge or experience to tell if the answer is accurate, the safe bet is to assume it is inaccurate. As is the case with any content on the internet these days, use critical thinking and trust your gut.

I once did a test where I used Microsoft Copilot to guide me through setting up a syslog server with a graphical reporting engine. This is a very technical task that system administrators do frequently, and an experienced sysadmin can complete it in 15 to 20 minutes or less. I had a test server to play with and followed Copilot’s instructions to the letter. I spent 8 hours going round and round, and at the end of the day, I had nothing to show for it. I would execute the instructions, get an error, and pass it back to Copilot. Each time, the answer was something along the lines of “Sorry about that, I know exactly where we went wrong, here is how you fix it,” which didn’t fix it and just gave me a new error. Round and round we went. In this test, the incorrect instructions were harmless and only wasted my time. In other scenarios, they could have led to a significant business impact or worse.

Using generative AI to program or author articles is all the rage these days. I am proud to say that every single word in every blog article I have ever written was written by me; AI was involved only in the research, never in the actual writing. My philosophy is: why should anyone bother to read something I couldn’t be bothered to write?

Now, do not get me wrong: I am no AI hater. I am just pointing out the dangers of this new tool and helping folks understand that it is, in fact, a tool. Used properly, it can do wonders; used incorrectly, it can be destructive. Going back to the hammer example, a hammer can build or destroy; it all depends on how you use it.

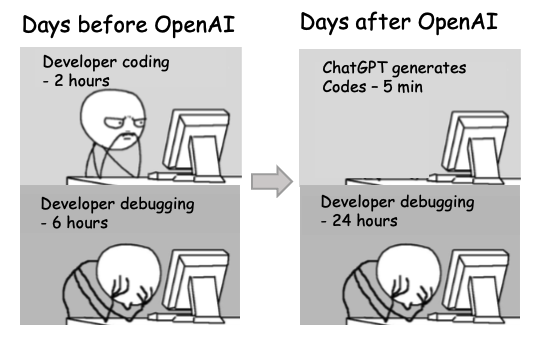

Coding with AI

The biggest hype these days is that anyone can code with AI. For some random reason, this brings to mind the infinite monkey theorem, which states that “a monkey hitting keys independently and at random on a typewriter keyboard for an infinite amount of time will almost surely type any given text, including the complete works of William Shakespeare.”

Anyway, back to coding with AI: yes, one can have LLMs generate code, and the rate at which that code compiles and even runs without errors is rapidly improving. To me, this discussion misses the point about what it takes to produce quality software. Just because code runs and appears to do what it should does not mean it actually does.

Just as the text it generates contains errors, so will the code it generates. The problem is that errors are much easier to spot in text than in code.

If you developed an app without developers, chances are very high you did not bother with any app testing, except maybe a superficial “yep, it seems to work.” Some additional food for thought:

- What is your priority: lines of code written or quality of the code?

- How will you ensure the application produces 100% deterministic output? What happens if there is an error in the output?

- How do you plan to ensure the quality of your product?

- When bugs come up, who is going to fix them? Experience has shown that code produced by an LLM has an order of magnitude more bugs than code written by a human. In my experience, LLMs cannot fix bugs; when I tried, all I got was spaghetti code. I am no expert here, though, so maybe I’m just not providing the right context or prompt.

- Are you skipping change control and peer review? These practices have been recognized as maturity indicators for decades and are required by ISO and other frameworks.

- Does security matter to you? Experience has shown that code produced by LLMs tends to have many more security issues than traditional code. Even if your LLM has a built-in Static Application Security Testing (SAST) tool [looking at you, Claude], that’s only part of the solution. Claude’s SAST tool is too new for us to know whether it is any good. There are dozens of SAST tools out there, and as any AppSec engineer can tell you, they are far from created equal.

Again, I’m not trying to dissuade you from using LLMs in your development; I’m just offering some food for thought and helping folks understand that if Joe in sales, with no prior programming experience, uses Claude Code to create an app, you may not want to sell it without further scrutiny.

If you still enforce code review and change management, where a human developer reviews the code, understands it, and takes ownership of it, then you are doing OK. Just make sure to check all the includes and imports, as you would check cited sources. Just as having a human developer code-review their own work won’t fly, having an LLM check code from another LLM (same or different model) doesn’t work either. You can have change requests generated automatically, but they need to be reviewed and approved by a human; otherwise, you defeat the point of change control.
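Checking imports is one of the few parts of that review that is easy to partly automate. LLMs have been known to reference packages that do not exist, so here is a minimal sketch, assuming the generated code is Python, that parses the source with the standard-library `ast` module (without running it) and reports any top-level module that `importlib.util.find_spec` cannot locate in the current environment. The function name `unresolved_imports` is hypothetical, and the check is deliberately narrow: a name that does resolve could still be the wrong package or a malicious look-alike, so a human still needs to confirm that every dependency is the one intended.

```python
import ast
import importlib.util


def unresolved_imports(source: str) -> list[str]:
    """List top-level modules imported by `source` that cannot be found
    in the current Python environment (hypothetical helper)."""
    tree = ast.parse(source)  # parse only; the code is never executed
    modules: set[str] = set()
    for node in ast.walk(tree):
        if isinstance(node, ast.Import):
            # e.g. "import os.path as p" -> "os"
            for alias in node.names:
                modules.add(alias.name.split(".")[0])
        elif isinstance(node, ast.ImportFrom) and node.level == 0 and node.module:
            # absolute "from x.y import z" -> "x"; relative imports are skipped
            modules.add(node.module.split(".")[0])
    # find_spec returns None when no importer can locate the module
    return sorted(m for m in modules if importlib.util.find_spec(m) is None)


generated = """
import json
import surely_not_a_real_pkg_xyz
from os import path
"""
print(unresolved_imports(generated))  # ['surely_not_a_real_pkg_xyz']
```

A non-empty result is a red flag worth raising in the review; an empty one only means every name exists locally, not that the code is correct.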

There is also a lot of news these days about companies laying off staff and replacing them with AI. I predict this will not end well for them unless they are doing layoffs because they are overstaffed and trying to sound cool by blaming AI. What I think when I hear news like that is that the company doesn’t care about quality, and they will likely experience a security incident very soon.

An apt analogy is a cabinet factory that custom-made all its cabinets by hand. Then it bought machinery that fabricates all the cabinets automatically, with no human involvement, so it laid off all its carpenters. Now the only human in the factory is the forklift operator who loads the trucks. Without a QA department, what would happen to product quality and customer satisfaction, especially when a cabinet collapses and breaks all the customer’s stuff?

References

IBM. (2023, October 12). Understanding the different types of artificial intelligence. Retrieved from IBM: https://www.ibm.com/think/topics/artificial-intelligence-types

IBM. (2023, November 2). What are large language models (LLMs)? Retrieved from IBM: https://www.ibm.com/think/topics/large-language-models

Mearian, L. (2024, February 7). What are LLMs, and how are they used in generative AI? Retrieved from Computerworld: https://www.computerworld.com/article/1627101/what-are-large-language-models-and-how-are-they-used-in-generative-ai.html

Wikipedia. (2025, January 20). Artificial general intelligence. Retrieved from Wikipedia: https://en.wikipedia.org/wiki/Artificial_general_intelligence

Wikipedia. (2025, January 22). Generative artificial intelligence. Retrieved from Wikipedia: https://en.wikipedia.org/wiki/Generative_artificial_intelligence

Wikipedia. (2025, January 17). Large language model. Retrieved from Wikipedia: https://en.wikipedia.org/wiki/Large_language_model